CoSTAR Network Infrastructure: AI Compute Facilities

An outline and mapping of our cross-network resources for developing state-of-the-art AI compute facilities and driving innovation in creative technology and beyond.

This infrastructure is purpose-built to support training and prototyping of advanced AI systems—such as multimodal generative models for the creative industries—while also enabling research and development across academia, startups, and industry partners.

Our pilot cluster is already operational, offering:

- 3 high-performance servers, each hosting 4 or 8 GPUs and up to 2TB of system memory

- 20 NVIDIA H200 GPUs, each with 141GB of next-generation high-bandwidth memory

- Ultra-fast NVMe storage, optimised for AI workloads

In May 2026, we will expand to a full-scale cluster, delivering:

- 10 additional 8-way GPU servers, each designed for high-demand AI workloads

- 80 additional NVIDIA H200 GPUs, bringing the system to more than 14TB of total GPU memory

- High-speed interconnects (400Gb InfiniBand) for large-scale, multi-node training

- 750TB of ultra-fast NVMe storage

- Server equipped with 2× NVIDIA H100 GPUs (80GB VRAM each, 160GB combined), 386GB RAM, 19TB of NVMe storage, and AMD EPYC 9334 processors with 128 cores

- Server equipped with an NVIDIA Titan RTX (12GB VRAM), 2× NVIDIA 4080 GPUs (8GB VRAM each), and 64GB RAM

- Server equipped with 2× NVLinked RTX 6000 Ada GPUs, 48GB VRAM each (96GB combined)
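The aggregate GPU memory figures for the H200 clusters can be checked with a few lines of arithmetic. The sketch below is illustrative only: it uses the GPU counts and the 141GB per-GPU figure quoted above, assumes decimal units (1TB = 1000GB), and the function and constant names are hypothetical.

```python
# Sanity check of the H200 memory totals quoted in the lists above.
# Assumption: decimal units, i.e. 1 TB = 1000 GB.

H200_MEMORY_GB = 141          # per-GPU memory, from the pilot cluster list
PILOT_GPUS = 20               # pilot cluster
EXPANSION_SERVERS = 10        # additional 8-way servers
GPUS_PER_EXPANSION_SERVER = 8


def total_gpu_memory_tb(gpu_count: int, memory_per_gpu_gb: int = H200_MEMORY_GB) -> float:
    """Return aggregate GPU memory in TB for a number of identical GPUs."""
    return gpu_count * memory_per_gpu_gb / 1000


expansion_gpus = EXPANSION_SERVERS * GPUS_PER_EXPANSION_SERVER  # 80 GPUs
pilot_tb = total_gpu_memory_tb(PILOT_GPUS)
full_tb = total_gpu_memory_tb(PILOT_GPUS + expansion_gpus)

print(f"Pilot cluster: {PILOT_GPUS} GPUs, {pilot_tb:.2f} TB")
print(f"Full-scale cluster: {PILOT_GPUS + expansion_gpus} GPUs, {full_tb:.2f} TB")
```

Under these assumptions, the 100 H200 GPUs of the full-scale cluster come to 14.1TB, consistent with the "more than 14TB" figure; the pilot cluster alone comes to 2.82TB.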

Workstation — Dell Precision Tower 7960

- NVIDIA RTX 6000 Ada, 48GB

- 128GB RAM

- Intel Xeon CPU, 12 cores

- 2TB NVMe storage

- 16TB mechanical storage

Control and Render Node — AMD Ryzen Threadripper PRO 7975WX

- 32 cores

- 256GB RAM

- NVIDIA RTX 6000 Ada, 48GB

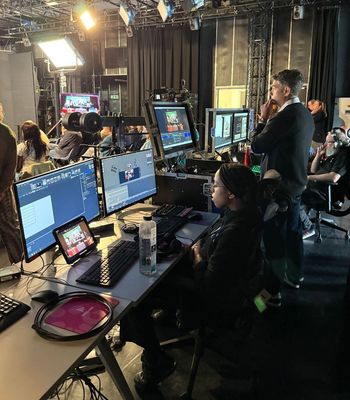

LED Volume Processing

- 2× Brompton Tessera SX40

Standalone PCs — 10× Dell Alienware

- NVIDIA RTX 4090

- Intel Core i9, 28 cores

- 64GB RAM